Beyond Guardrails: Using Semantic Layers for AI Data Authorization

Louis-Philippe Perron

Most tutorials on giving an LLM agent access to a database for applications like ‘chat with your data’ treat security as a prompt engineering problem. They add system instructions telling the model not to query outside the current tenant, filter outputs for sensitive data, and defend against prompt injection. These are all worthwhile, but they share a fundamental limitation: they rely on the LLM to enforce the rules.

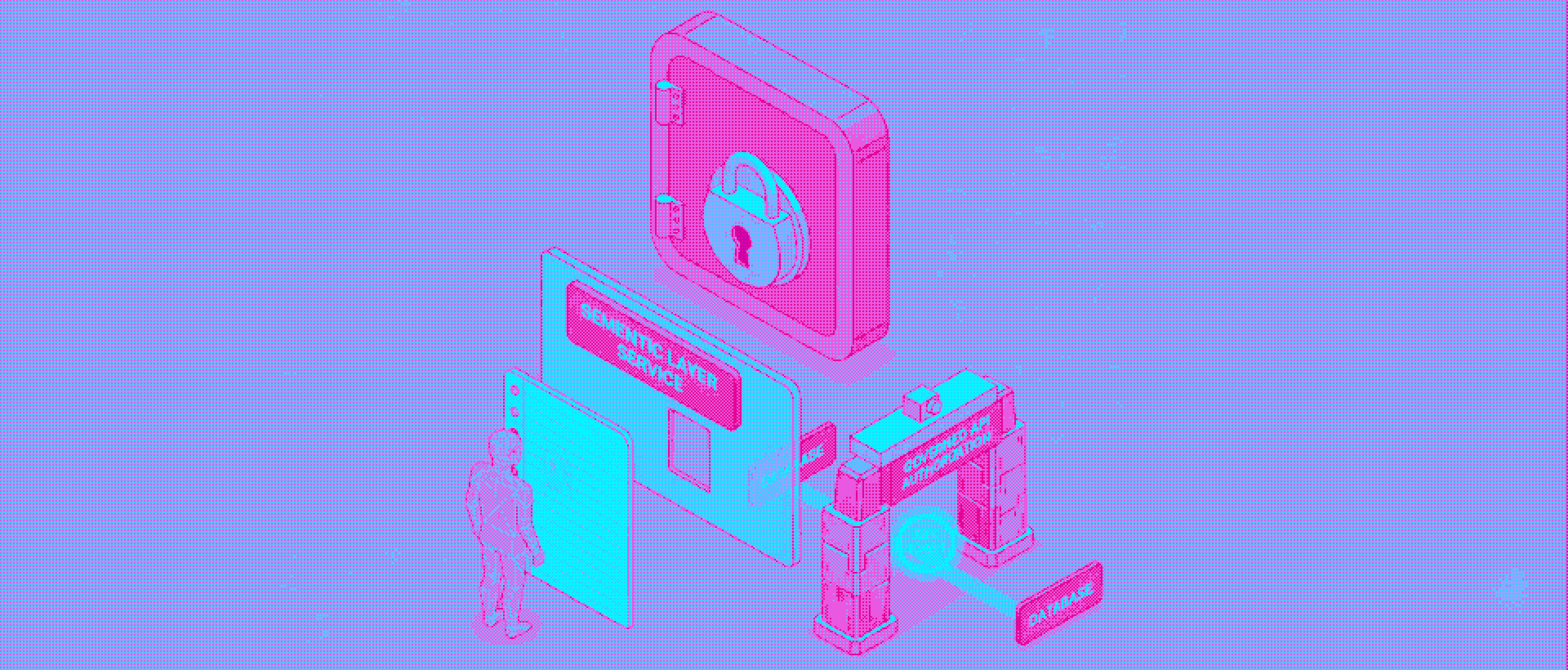

In a recent project building a conversational agent over healthcare practice data, we took a different approach. Rather than hoping the model would respect access boundaries, we placed a semantic layer between the agent and the database that enforces tenant isolation at the API level. The LLM never touches the database directly. User tokens flow through the entire agentic workflow, and authorization is validated before any query executes. The result: the model doesn’t need to “behave” because it structurally cannot access what it shouldn’t.

The Prompt Guardrail Problem

The standard “talk to your data” pattern is straightforward: user asks a question, LLM translates it to SQL, query runs, results come back. To handle multi-tenant access control, you add prompt instructions like “only query tables belonging to tenant X” or post-process outputs to catch anything that shouldn’t be there. The problem is that these are soft constraints. The LLM is still generating raw SQL with full database access underneath. If a prompt injection manipulates the model’s instructions, if the model misinterprets scoping rules, or if a schema edge case produces an unexpected join, the wrong data can surface. In a healthcare context with sensitive personal and financial records, “it mostly works” isn’t a security posture. It’s the difference between telling someone “please don’t open that door” and not giving them the key.

What a Semantic Layer Changes

A semantic layer sits on top of the database and exposes a governed API instead of raw table access. It defines a curated data model including dimensions, measures, relationships, and only allows queries expressed in those terms. The tool we used in this case is Cube, though the pattern applies to any semantic layer that supports token-based authorization. In our case, Cube sat on top of a Redshift warehouse containing healthcare data sourced from EHRs and other systems. The key shift: our LLM agent doesn’t generate SQL or touch the database directly. Instead, it orchestrates a set of tools—one to retrieve relevant views from vectorized metadata, another to construct valid Cube queries, and a third to execute them through the Cube API. Cube translates those requests into SQL internally, applying authorization rules before anything executes. The agent works through a constrained interface, not against an open database.

This has an added benefit beyond security. When an agent is pointed at a raw database, it has to reason about tables, joins, and column naming conventions designed for DBAs, not language models. A semantic layer’s curated model provides a smaller, well-defined interface with human-readable descriptions. By vectorizing that metadata into a retrieval system, we saw roughly 98% accuracy in surfacing the correct views for a given question—the well-structured semantic model made retrieval significantly easier than working against raw schema would have been.

How Authorization Flows Through the Stack

The mechanism is token propagation through the full agentic workflow. The user authenticates through the frontend (Vercel) via Supabase, which issues a JWT with identity and tenant claims. When the user asks a question, that JWT travels with the request through the agent’s tool calls—semantic search, schema retrieval, query construction—all the way to the Cube API.

Cube’s authorization layer validates the token and uses the tenant claims to scope every query. Even if the LLM constructs a request for data outside the user’s scope, Cube rejects it or filters results down to what the token permits. The LLM doesn’t need to know about tenant boundaries. It queries the semantic layer, and the semantic layer decides what comes back.

To validate this, we deployed two parallel applications against the same backend: a public demo restricted to Medicare data and a private client version with full access. The public app could not retrieve private client data through any query path, confirming tenant isolation independent of what the LLM attempted.

Practical Tradeoffs

This approach isn’t free, and some of what we learned is worth flagging for anyone considering a similar architecture.

Latency matters

Adding a semantic layer hop introduces overhead. We initially tried Cube’s own AI inference API for query generation, but response times consistently exceeded 60 seconds with poor answer quality. The solution was to keep Cube as the authorization and execution layer but replace its query generation entirely. Vectorizing Cube’s view metadata for retrieval and using a dedicated LLM trained on Cube’s query syntax to construct the actual queries was the direction we took. That brought latency under 15 seconds. The semantic layer itself wasn’t the bottleneck, but how you handle query generation against it is a design decision that directly affects user experience.

Model maintenance is real.

As your schema evolves, the semantic model needs to stay in sync. We found that gaps in our metadata descriptions accounted for a portion of query construction failures in evaluation. Keeping the semantic layer well-documented is ongoing work, not a one-time setup.

Authorization isn’t the full threat surface.

You still need prompt injection defenses, input validation, and output filtering. We added a categorizing LLM at the entry point to classify incoming questions and route them appropriately, which doubled as a defense against injection attempts reaching the main agent. The semantic layer handles authorization; it doesn’t replace the rest of your security stack.

The Key Insight

If you’re building agentic access to multi-tenant data, push authorization down the stack. Don’t rely on the LLM to enforce access control through prompt instructions. A semantic layer gives you a governed API that the agent works through, not around—validating every request against the user’s actual permissions before anything touches the database. The pattern: authenticate the user, issue a token with the right claims, propagate it through your agent’s tool calls, and let the semantic layer validate on every request. The LLM becomes a translation layer between natural language and a governed API—which is a much more appropriate role for it than acting as both query generator and security enforcer.